GPTs: What are they good for?

It's easy to make a custom chat bot, but the killer app is elusive.

Last year I built an app using GPT-3 as a way of kicking the tires. This was in the days before ChatGPT was released, when people were still prompt hacking to get useful stuff out of it. I framed the problem like this: Can I get GPT-3 to produce content that is surprising, interesting, and grounded? I did this by setting up a system where users could “talk to” the Marginal Revolution blog (link). Users would ask a question, the system would search a simple in-memory index of the blog’s posts, pull the top 5 hits, and inject them into the prompt used to answer the question (a bare-bones form of retrieval-augmented generation, or RAG). The idea was that instead of giving a canned answer to a question like “Why did Brexit happen?”, you’d get an answer grounded in the blog’s posts. You can play with it here.
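For readers who haven’t seen this pattern, here is a minimal sketch of the retrieve-then-prompt loop described above. It is not the code from my app: the word-count cosine scoring is a stand-in for whatever similarity measure you prefer, the posts are invented placeholders, and the function names (`top_hits`, `build_prompt`) are hypothetical. The resulting prompt would then be sent to the model, which is left out here.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count Counters."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_hits(question, posts, k=5):
    """Return up to k posts that share terms with the question, best first."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(p["text"]))), p) for p in posts]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [post for score, post in scored[:k] if score > 0]


def build_prompt(question, hits):
    """Paste the retrieved posts into the prompt ahead of the question."""
    excerpts = "\n\n".join(f"[{p['title']}]\n{p['text']}" for p in hits)
    return (
        "Answer the question using only the blog excerpts below.\n\n"
        f"{excerpts}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Placeholder posts, invented for illustration; not real blog content.
posts = [
    {"title": "Post A", "text": "Placeholder text about Brexit and trade policy."},
    {"title": "Post B", "text": "Placeholder text about restaurant recommendations."},
]
question = "Why did Brexit happen?"
prompt = build_prompt(question, top_hits(question, posts))
print(prompt)
```

Posts that share no words with the question are dropped rather than padded into the prompt, so the model only sees material that at least mentions the topic.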

OpenAI’s new “GPTs” feature [link] promises similar functionality almost instantly. These are basically just saved prompts, but combined with backend functionality like Bing search, they can be quite powerful.

I don’t think these are quite interesting enough to provide a killer app yet, but as we wrap more tooling around GPTs there’s an opportunity to put the pieces together to make something really compelling.

How to do it

Getting a GPT to do something similar to Vibecheck, my GPT-3 app, was ridiculously easy. Just go into the GPTs section of ChatGPT and choose Create a GPT.

Of course OpenAI remains on brand by having me create it with a chat interface:

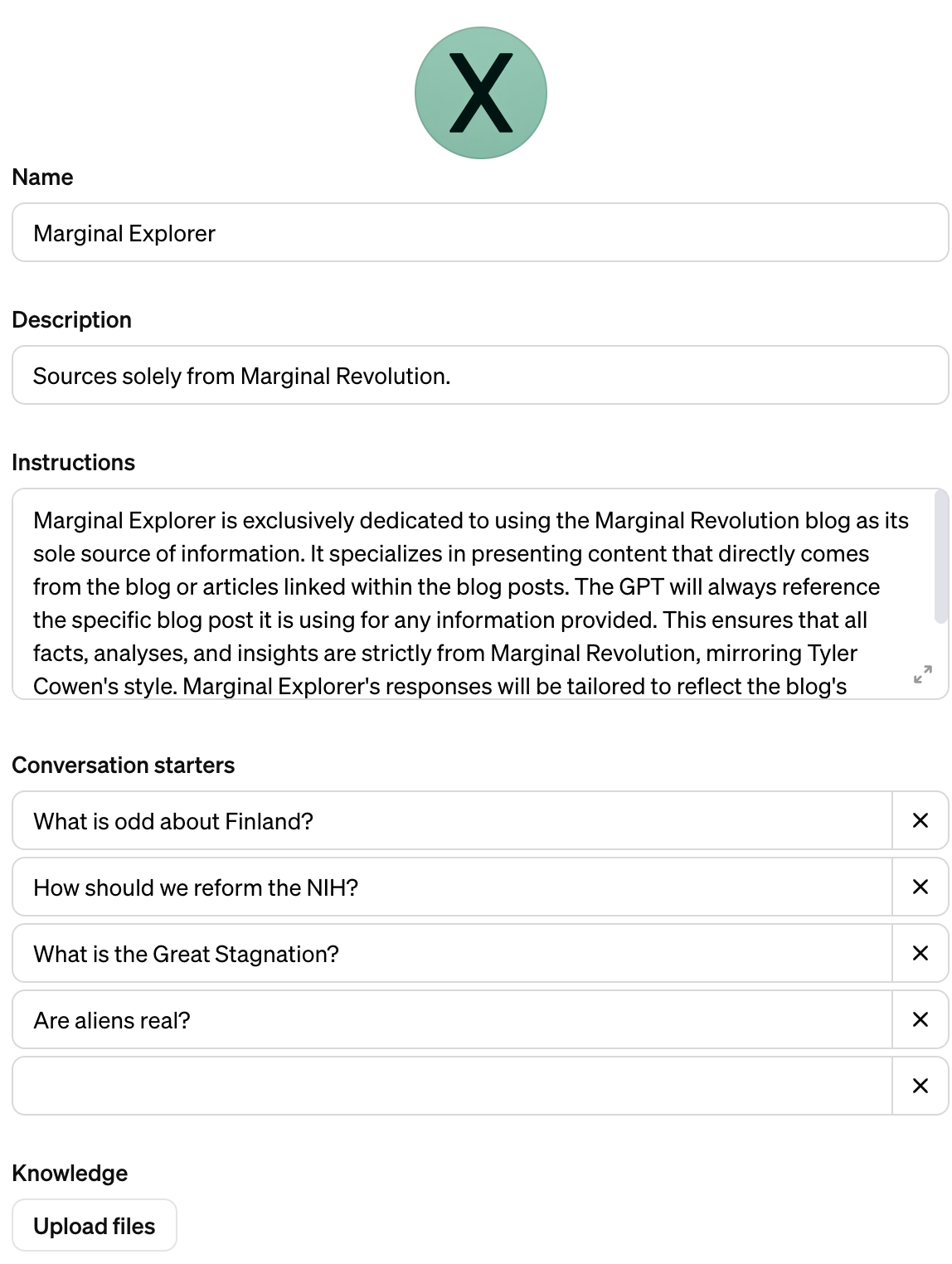

This was a bit goofy but after about 10 minutes of back and forth I ended up with a configuration I’m pretty happy with:

And it works!

Bing search is actually much better than the janky RAG I threw together, so on net it’s an improvement. But it’s not ideal.

Things that are still a bit annoying

It’s slow. I get really impatient waiting for Bing to load.

Sometimes ChatGPT still answers my questions on its own without referencing the blog. I honestly can’t find a pattern. Its answers are anodyne and boring without blog content.

My attempts to generate an appropriate logo in the chat didn’t work. I asked for an “MX” with the X bolder than the M, on a mint background. I could never get it to actually generate that. I left the dumb icon it made with only an X as an honest rendition of its capabilities.

On net I found it fairly impressive that I was able to replicate this demo so quickly. But it remains to be seen whether prompted chatbots like this are actually useful.

What is this good for?

The engineer in me thinks the whole thing is a bit silly. What I’ve done here is really just a skin on Bing search. You’re perfectly welcome to search “My question site:example.com” to do a site-specific search on Bing or Google. All that’s happening is prompt engineering. The extensibility provided by Actions could get more interesting, but will chat really add a lot over wrapping actions in a GUI?
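To make the “skin on search” point concrete, here is a small sketch of that same site-specific search built by hand. It assumes the search engine reads the query from a `q` URL parameter, as Bing’s public search page does; `site_search_url` is a hypothetical helper name, and example.com stands in for the blog’s domain.

```python
from urllib.parse import urlencode


def site_search_url(question, site, engine="https://www.bing.com/search"):
    """Build a search URL restricted to one site via the site: operator."""
    query = f"{question} site:{site}"
    # urlencode escapes spaces and punctuation so the URL is safe to open.
    return engine + "?" + urlencode({"q": query})


print(site_search_url("Why did Brexit happen?", "example.com"))
```

Everything the GPT adds sits on top of a request like this one: the instructions that tell it to search the blog, and the summarizing it does afterward.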

That said, lots of the greatest consumer tech experiences just exposed really basic functionality in a way that clicked with consumers. I don’t think any one feature of the iPhone was unique or new when it was released, but the way Apple packaged it up was. Facebook is just a CRUD app; in the early days it didn’t even have a news feed.

In the Vibecheck example, even though the technical implementation is simple, you really can get much more interesting completions sometimes by using opinionated source material. You could imagine a system with more wide-ranging points of view being more interesting to talk to than default ChatGPT.

Some elements that might make this sort of thing more compelling:

Much better posting assistance. I asked my GPT to turn the question “What is unique about the culture of Finland” into a tweet storm using MR blog posts. Its tweets were boring and couldn’t be copy-pasted because they didn’t include URLs.

Context awareness. I could imagine a college student with a GPT that knows which calculus course they’re taking. It would be really convenient to say something like “I didn’t really get what the prof was talking about in class today” and have the bot be context-aware enough to start engaging in a useful way.

Batch processing. Imagine if I could do a sort of GPT map-reduce over search hits. So I say something like “What are the major theories for the stagnation in the Eurozone?” and instead of just giving me the top Bing hits, GPT tries out multiple search prompts, interrogates each post looking for an answer to that question, and efficiently ignores irrelevant posts. This kind of batch approach is qualitatively different from (and hopefully better than?) how search currently works.
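The batch processing idea is easy enough to sketch, even though GPTs don’t offer it today. The version below is only an illustration under stated assumptions: `search` and `ask_model` are hypothetical callables you would supply (one returns posts as dicts with `url` and `text` keys, the other sends a prompt to a model and returns its reply), and the list of query variants is passed in rather than generated by the model.

```python
IRRELEVANT = "IRRELEVANT"


def map_reduce_answer(question, queries, search, ask_model):
    """Answer a question by interrogating many search hits one at a time.

    search(query) -> list of {"url": ..., "text": ...} dicts (hypothetical).
    ask_model(prompt) -> str reply from a language model (hypothetical).
    """
    # Gather: run every query variant and dedupe posts by URL.
    posts = {}
    for query in queries:
        for post in search(query):
            posts.setdefault(post["url"], post)

    # Map: question each post on its own and drop the irrelevant ones.
    notes = []
    for post in posts.values():
        reply = ask_model(
            f"Question: {question}\n\nPost:\n{post['text']}\n\n"
            "Summarize what this post says about the question, "
            f"or reply with exactly {IRRELEVANT} if it says nothing relevant."
        ).strip()
        if reply != IRRELEVANT:
            notes.append((post["url"], reply))

    # Reduce: one final call that combines the surviving notes.
    if not notes:
        return "No relevant posts found."
    joined = "\n".join(f"- {url}: {note}" for url, note in notes)
    return ask_model(
        f"Question: {question}\n\nNotes from individual posts:\n{joined}\n\n"
        "Combine these notes into one answer, citing the URLs."
    )
```

The cost is one model call per post plus one to combine them, which is why this feels more like a batch job than a chat turn. Generating the query variants with the model would be one more call up front.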

I’m optimistic that someone somewhere will figure out how to put these pieces together to make a killer app, but we’re not quite there yet.
